Once we have a standard server build of Ubuntu 16.04 LTS running for our cloud admin host, we need to start configuring the cloudy bits themselves.

To start with, we want to configure the interfaces we’re going to use on the VM. I set mine up with some static IP addresses in /etc/network/interfaces like so:

```
# This file describes the network interfaces available on your system
# and how to activate them. For more information, see interfaces(5).

source /etc/network/interfaces.d/*

# The loopback network interface
auto lo
iface lo inet loopback

# The primary network interface
auto ens4
iface ens4 inet static
    address 10.232.8.121
    netmask 255.255.255.0
    gateway 10.232.8.254
    dns-nameservers 10.232.8.2 10.232.8.254
    dns-search melbourne.eigenmagic.net

# Internal cloud universe IP
auto ens3
iface ens3 inet static
    address 10.75.0.1
    netmask 255.255.0.0
    # No gateway line here: this host is itself the router (10.75.0.1) for
    # the cloud network, and ens4 already supplies the default route.
```
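As a quick sanity check that the two interfaces really sit on separate networks (the lab uplink on ens4, the cloud network on ens3), you can mask each address with its netmask. Here's a small bash sketch that does this with shell arithmetic. The function names are hypothetical helpers for illustration, not part of Ubuntu's networking tools.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not from any package): IPv4 <-> integer conversion
# and network-address calculation using plain bash arithmetic.

ip_to_int() {
  local IFS=.            # split the dotted quad on dots
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

int_to_ip() {
  local n=$1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# Print the network address for an IPv4 address and dotted-quad netmask.
network_of() {
  local addr mask
  addr=$(ip_to_int "$1")
  mask=$(ip_to_int "$2")
  int_to_ip $(( addr & mask ))
}

# Values copied from the interfaces file above.
network_of 10.232.8.121 255.255.255.0   # lab uplink (ens4): prints 10.232.8.0
network_of 10.75.0.1 255.255.0.0        # cloud network (ens3): prints 10.75.0.0
```

The 255.255.0.0 netmask on ens3 is what makes the cloud network a /16, which matters later when we hand out DHCP leases across the whole 10.75.0.0 range.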

Network Booting

We’re going to be running a bunch of VMs that will stand in for physical/virtual hosts in our cloud. I’m not sure exactly what kind of physical topology I want to simulate in the lab, but we do want to be able to automate it as much as possible, so let’s set up our cloud admin server to serve as a boot host.

This is very reminiscent of the way I used to use Solaris Jumpstart back in the late 1990s and early 2000s. We set up the main server as a network boot host, and then any of our cloud hosts that are on the right network will boot and self-configure.

I had to do a lot of reading and playing around to find out how this works with Kubernetes, as there appear to be several similar but ultimately different ways to achieve it. I’ll detail the steps I went through to get a minimal boot and configuration working, but overall we want to do this: https://github.com/coreos/coreos-baremetal/blob/master/Documentation/network-setup.md

Create Shell VMs

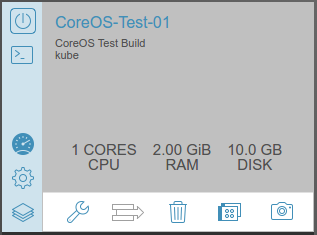

First of all we create some shell VMs in the Scale Computing interface. This is similar to having some physical servers racked and stacked but without personalities. We’re going to use persistent boot configuration, which means they need storage to store state on, rather than re-imaging themselves from scratch on every boot. The storage will be local to each VM, basically like having a disk inside each host.

We use the Scale interface to create three VMs that will become our initial Kubernetes cluster. I’ve given them just 10GB of disk because I don’t really know what the storage will be for, and we’ll be rebuilding these things a lot as we experiment, so we can always make it bigger later. We’ll be using CoreOS (now called Container Linux) for the operating system, because it’s well integrated with Kubernetes, and other than that it doesn’t really matter what the OS is.

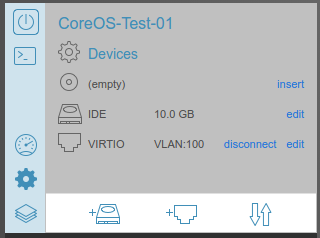

Note that I’ve selected the IDE type for the storage driver. CoreOS doesn’t appear to deal well with the VIRTIO driver on the Scale gear, and the disk doesn’t show up as a /dev/sdX device unless the type is IDE.

Also note that there’s only a single network interface, on our cloud network (VLAN 100). These VMs will only be able to see the cloud admin server, not the rest of the lab.

Boot Chain

The next stage of our configuration takes place entirely on our cloudadmin server. We need a series of tools to set up a boot chain that runs in this order:

- VM powers on

- VM loads inbuilt PXE client and tries to boot from network

- VM uses PXE client to get an IP address via DHCP from cloudadmin

- PXE client then downloads boot image from cloudadmin

- Client boots and then fetches bootcfg information, which triggers an Ignition install/build

- Client builds itself using Ignition configuration

It turns out that the Scale Computing version I have uses an old PXE boot client that doesn’t support iPXE, which is a drag. To work around this, we get the PXE client to download and run an iPXE client image. You can read more about how this works here: http://ipxe.org/howto/dhcpd

Get the Software

The software packages we’ll need for this are as follows:

- isc-dhcp-server, for the DHCP server

- pxelinux, which provides the PXE bootloader files

- tftpd-hpa, provides a TFTP server

- The CoreOS baremetal package: https://github.com/coreos/coreos-baremetal

CoreOS

Get the CoreOS baremetal package by cloning the Git repository from GitHub:

```shell
git clone https://github.com/coreos/coreos-baremetal
```

Once that’s done, we want to grab the prebuilt CoreOS images we need like so:

```shell
$ cd coreos-baremetal/scripts
$ ./get-coreos stable 1185.3.0 ./
```

This will grab the Stable version 1185.3.0 of the CoreOS boot image and place it in ./coreos/1185.3.0/ (relative to the scripts directory).

Install the other packages using APT:

```shell
sudo apt install isc-dhcp-server pxelinux tftpd-hpa
```

Configure DHCP For Booting

We need to set up DHCP so that our VMs can network boot. On cloudadmin, the file you need to edit is /etc/dhcp/dhcpd.conf.

In the main body of the file, uncomment this line to permit network booting:

```
allow booting;
```
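The exact contents of the stock dhcpd.conf can vary between package versions, so here's a hedged bash sketch that handles both cases: it uncomments an existing `#allow booting;` line if it finds one, and appends the directive otherwise. `enable_booting` is a hypothetical helper name, and the sketch assumes GNU sed (the Ubuntu default) for `sed -i -E`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: make sure "allow booting;" is active in a dhcpd.conf.
# Idempotent: running it twice leaves exactly one active directive.
enable_booting() {
  local conf="$1"
  if grep -qE '^[[:space:]]*allow booting;' "$conf"; then
    return 0                                  # already active, nothing to do
  elif grep -qE '^[[:space:]]*#[[:space:]]*allow booting;' "$conf"; then
    # Strip the leading comment marker from the existing line (GNU sed)
    sed -i -E 's/^[[:space:]]*#[[:space:]]*(allow booting;)/\1/' "$conf"
  else
    echo 'allow booting;' >> "$conf"          # not present at all: append it
  fi
}
```

On cloudadmin you'd run it as root against /etc/dhcp/dhcpd.conf, then restart the isc-dhcp-server service so the change takes effect.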

We also need to configure the network we want to boot in. This is one reason we have a separate interface for our cloud network on the cloudadmin server: it makes it easier to keep the cloud network traffic separate from the lab uplink.

```
# We want to dynamically detect new hosts coming online and
# PXE boot them as CoreOS instances when they do
subnet 10.75.0.0 netmask 255.255.0.0 {
    range 10.75.0.2 10.75.255.254;
    option broadcast-address 10.75.255.255;
    option routers 10.75.0.1;
    option domain-name-servers 10.75.0.1;

    if exists user-class and option user-class = "iPXE" {
        filename "http://bootcfg.eigencloud.eigenmagic.net:8080/boot.ipxe";
    } else {
        filename "undionly.kpxe";
    }
}
```

This configuration sets up 10.75.0.0/16 as the network that we will boot devices in, and sets the DHCP range to every address in that network except 10.75.0.0 (the network address), 10.75.0.1 (our cloudadmin server) and 10.75.255.255 (the broadcast address).
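If you want to check the arithmetic behind that range, here's a bash sketch that converts the addresses to integers and counts what's left for leases. `ip_to_int` is a hypothetical helper of my own, and the addresses are copied from the subnet declaration above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

network=$(ip_to_int 10.75.0.0)
first=$(ip_to_int 10.75.0.2)          # start of the dhcpd range
last=$(ip_to_int 10.75.255.254)       # end of the dhcpd range
total=$(( 1 << (32 - 16) ))           # every address in a /16: 65536
broadcast=$(( network + total - 1 ))  # 10.75.255.255 as an integer

leases=$(( last - first + 1 ))
echo "leasable addresses: $leases"                      # 65533
echo "held back: $(( total - leases ))"                 # 3: network, cloudadmin, broadcast
echo "first lease is network+$(( first - network ))"    # network+2, skipping .0.0 and .0.1
echo "last lease is broadcast-$(( broadcast - last ))"  # broadcast-1, stopping short of .255.255
```

That's plenty of headroom for a lab: the range could be much smaller, but a /16 means we never have to think about running out.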

Then we have a little bit of logic that defines how we chainload from PXE to iPXE booting. An iPXE client sets the DHCP user-class option to the value iPXE when it asks for an address. If the request doesn’t come from iPXE, the filename "undionly.kpxe" line tells the client to download an iPXE bootloader via TFTP. That bootloader then contacts the DHCP server again, this time identifying itself as iPXE, and gets the iPXE filename (more on that later).
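To make the two-pass logic concrete, here's a small bash model of the if/else in the subnet block: it takes the user-class a client sends (empty when there isn't one) and prints the filename dhcpd would hand back. This only illustrates the decision. It isn't something dhcpd runs, and `boot_filename` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical model of the dhcpd.conf if/else: map a client's DHCP
# user-class (empty if the client sent none) to the boot filename returned.
boot_filename() {
  local user_class="$1"
  if [[ "$user_class" == "iPXE" ]]; then
    # Second pass: iPXE itself is asking, so hand it the HTTP boot script
    echo "http://bootcfg.eigencloud.eigenmagic.net:8080/boot.ipxe"
  else
    # First pass: the firmware PXE client fetches the iPXE loader via TFTP
    echo "undionly.kpxe"
  fi
}

# Walk through the two DHCP exchanges a VM makes while booting
for pass in "" "iPXE"; do
  printf 'user-class=%-5s -> %s\n' "${pass:-none}" "$(boot_filename "$pass")"
done
```

The key point is that the same VM asks twice: once from the old firmware client, and once from the iPXE loader it just downloaded.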

Where does undionly.kpxe come from? It’s a prebuilt image from the iPXE project’s download site (boot.ipxe.org), and the iPXE documentation linked earlier explains in more detail how the chainloading works. The default installation of tftpd-hpa serves files from /var/lib/tftpboot.

You can download the file directly into place like this:

```shell
$ cd /var/lib/tftpboot
$ sudo wget -nd http://boot.ipxe.org/undionly.kpxe
```
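On Ubuntu, tftpd-hpa reads the directory it serves from the TFTP_DIRECTORY setting in /etc/default/tftpd-hpa, so that's worth checking if a client can't fetch the file. Here's a sketch of such a check. `check_tftp_file` is a hypothetical helper, and both the defaults file and the file name are parameters so you can point it elsewhere.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether a file is present (and non-empty) in
# the directory tftpd-hpa serves. Defaults assume Ubuntu's stock config path.
check_tftp_file() {
  local defaults="${1:-/etc/default/tftpd-hpa}"
  local file="${2:-undionly.kpxe}"
  (
    # The defaults file is plain VAR="value" assignments; source it in a
    # subshell so its variables don't leak into the calling shell.
    . "$defaults" || exit 1
    path="${TFTP_DIRECTORY:?TFTP_DIRECTORY is not set in $defaults}/$file"
    if [ -s "$path" ]; then
      echo "ok: $path"
    else
      echo "missing or empty: $path" >&2
      exit 1
    fi
  )
}
```

Running `check_tftp_file` with no arguments checks for undionly.kpxe in whatever directory the stock config names.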

In my next post, I’ll go into how the next phase of the VM boot process works and how to configure bootcfg to get a minimal VM installed and running.
