My article went live on iTNews late on Saturday night, and it got picked up by Slashdot yesterday. As we saw, the predictions were as good as expected, and they got more accurate the higher up the countdown we went.

As promised, here’s a more detailed explanation of how I replicated Nick Drewe’s method, working only from a verbal description of how he did it.

Get Tweets

The first step was finding a search phrase that matched the Tweets people shared after they voted. I used “triplejgadget.abc.net.au”, which returned plenty of results on Twitter’s advanced search page.

Nick used the iMacros plugin for Firefox to automate the collection, but I’m more of a coding-type guy, so I fired up Python and queried Twitter’s search API through the python-twitter package.

You can download my script here.
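For anyone who wants to try something similar, here’s a minimal sketch of the collection step. It is not my original script. It assumes the python-twitter `Api.GetSearch` method and paginates backwards with `max_id`. The regex assumes vote links point at triplejgadget.abc.net.au, and the function names are made up for illustration. Twitter’s API access rules have changed a lot since then, so treat this as an outline rather than something guaranteed to run against the live service.

```python
import re

# ASSUMPTION: shared votes link to triplejgadget.abc.net.au (the search
# phrase used above); the rest of the URL path is unknown, so match anything.
VOTE_URL = re.compile(r"https?://triplejgadget\.abc\.net\.au/\S+")


def extract_vote_urls(texts):
    """Return every vote-page URL found in an iterable of strings."""
    found = []
    for text in texts:
        found.extend(VOTE_URL.findall(text))
    return found


def search_votes(api, term="triplejgadget.abc.net.au", pages=10):
    """Page backwards through Twitter search results and collect vote URLs.

    `api` is assumed to be a python-twitter `twitter.Api` object built with
    your own credentials; `pages` caps the number of requests made.
    """
    urls = []
    max_id = None
    for _ in range(pages):
        statuses = api.GetSearch(term=term, count=100, max_id=max_id)
        if not statuses:
            break  # nothing older left in the search window
        for status in statuses:
            # Tweets carry t.co short links; expanded_url holds the original.
            urls.extend(extract_vote_urls(u.expanded_url or "" for u in status.urls or []))
        # Only ask for Tweets strictly older than the oldest one seen so far.
        max_id = min(s.id for s in statuses) - 1
    return urls
```

To use it, you’d build the client with `twitter.Api(consumer_key=..., consumer_secret=..., access_token_key=..., access_token_secret=...)` and write the returned URLs to a file, one per line, for the clean-up step.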

The downside to this method is that Twitter’s search only returns Tweets from roughly the last 7 days, and often less. Because I ran my script on the Wednesday after voting closed, earlier votes had already dropped out of the search results, which limited the size of my sample and its predictive accuracy.

Clean Up

I ran the results from this script through two simple Unix commands, sort and uniq, to remove duplicates.
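If you don’t have a Unix shell handy, here’s a rough Python equivalent of `sort | uniq` for the URL list. This is a sketch. The file names you pass in are placeholders. Unlike the shell pipeline, it drops blank lines and sorts by code point rather than by locale. On the command line, `sort -u` does the same job in one step.

```python
def dedupe_sorted(lines):
    """Python take on `sort | uniq`: sorted lines with duplicates removed."""
    # rstrip() so "x\n" and "x" count as the same line; blank lines are skipped.
    return sorted({line.rstrip() for line in lines if line.strip()})


def dedupe_file(src, dst):
    """Read URLs from src, write the unique ones to dst, return how many."""
    with open(src) as fh:
        unique = dedupe_sorted(fh)
    with open(dst, "w") as fh:
        fh.write("\n".join(unique) + "\n")
    return len(unique)
```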

Then I was ready to grab all the pages from the list of URLs I’d collected. I checked them manually to see what the pages looked like, and wouldn’t you know it? TripleJ had handily tagged the artist and song right there in the HTML, so parsing the data out would be a lot easier.

I used wget to download all the pages into a directory for my next script to parse, driven by a simple script that paused for a second between downloads so that I wouldn’t clobber the TripleJ website.
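I used wget, but the same polite download loop looks something like this in Python using only the standard library. It’s a sketch. The `filename_for` naming scheme is hypothetical. The `fetch` parameter exists only so you can swap in something else, such as a stub for testing.

```python
import os
import time
import urllib.request


def filename_for(url):
    """Turn a URL into a safe local filename (hypothetical naming scheme)."""
    return "".join(c if c.isalnum() else "_" for c in url) + ".html"


def polite_download(urls, out_dir, delay=1.0, fetch=None):
    """Save each URL into out_dir, sleeping `delay` seconds between requests."""
    if fetch is None:
        def fetch(url):
            with urllib.request.urlopen(url) as resp:
                return resp.read()
    os.makedirs(out_dir, exist_ok=True)
    saved = []
    for i, url in enumerate(urls):
        if i:
            time.sleep(delay)  # pause between grabs so we don't hammer the server
        path = os.path.join(out_dir, filename_for(url))
        with open(path, "wb") as fh:
            fh.write(fetch(url))
        saved.append(path)
    return saved
```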

While wget was merrily downloading the files, I wrote another script to parse them and spit out a CSV file with the source page, artist, title and a number called “data_id”, which seems to be what TripleJ used to identify each unique artist/track combination in their vote database.

You can download this script here.
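As an illustration of the parsing step, here’s a minimal sketch using Python’s built-in `html.parser`. The markup it expects is an assumption: elements with `class="artist"` and `class="title"` plus a `data-id` attribute. I’m not reproducing the real TripleJ HTML here, so you’d need to adjust the class and attribute names to match whatever the pages actually contain.

```python
import csv
from html.parser import HTMLParser


class VotePageParser(HTMLParser):
    """Collect artist, title and data_id from one vote page.

    ASSUMPTION: class="artist" / class="title" elements and a data-id
    attribute; the real pages may be marked up differently.
    """

    def __init__(self):
        super().__init__()
        self.fields = {}
        self._capture = None  # which field the current element's text feeds

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if "data-id" in attrs:
            self.fields.setdefault("data_id", attrs["data-id"])
        classes = (attrs.get("class") or "").split()
        for name in ("artist", "title"):
            if name in classes:
                self._capture = name

    def handle_endtag(self, tag):
        self._capture = None

    def handle_data(self, data):
        if self._capture and data.strip():
            self.fields.setdefault(self._capture, data.strip())


def parse_vote_page(html):
    """Return a dict with whichever of artist/title/data_id were found."""
    parser = VotePageParser()
    parser.feed(html)
    parser.close()
    return parser.fields


def write_votes_csv(pages, out):
    """pages: iterable of (source, html) pairs; out: a writable text file."""
    writer = csv.writer(out)
    writer.writerow(["source", "artist", "title", "data_id"])
    for source, html in pages:
        f = parse_vote_page(html)
        writer.writerow([source, f.get("artist", ""), f.get("title", ""), f.get("data_id", "")])
```

Open the output file with `open("votes.csv", "w", newline="")`, as the `csv` module documentation recommends, so that the writer controls the line endings.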

Statistics

Lastly, I used the statistical programming language R to process the data and spit out some statistics. The Warmest guys just used a spreadsheet, but I love an excuse to play with R, and I learned a few new things, which made it worthwhile.

You can grab my R script here.
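The core of the tallying is simple enough to show in a few lines. The following is a Python sketch of counting votes per track and ranking them, not a translation of my R script. It assumes rows shaped like the CSV above (`artist`, `title`, `data_id` columns). Ties are broken by first appearance, because that’s how `Counter.most_common` orders equal counts.

```python
import csv
from collections import Counter


def rank_tracks(rows, top=100):
    """Count votes per data_id; return (rank, artist, title, votes) tuples."""
    counts = Counter()
    names = {}
    for row in rows:
        key = row["data_id"]
        counts[key] += 1
        names.setdefault(key, (row["artist"], row["title"]))
    ranked = counts.most_common(top)
    return [(i, *names[key], n) for i, (key, n) in enumerate(ranked, start=1)]


def rank_csv(path, top=100):
    """Convenience wrapper that reads the parser's CSV output."""
    with open(path, newline="") as fh:
        return rank_tracks(csv.DictReader(fh), top)
```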

Results

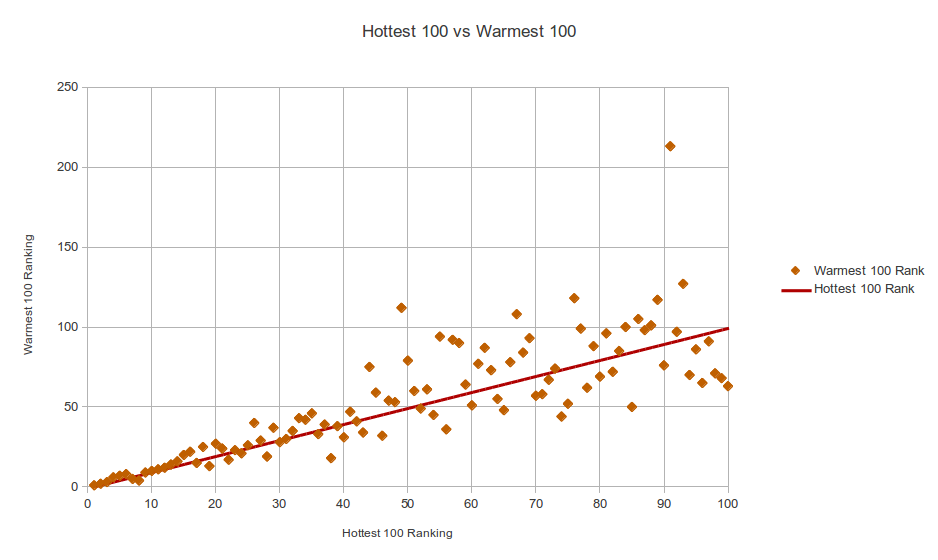

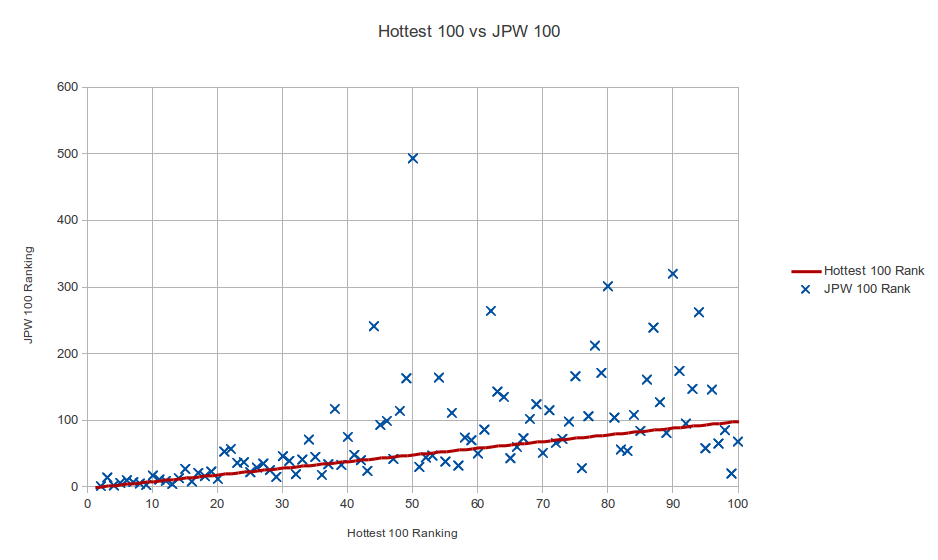

The data I collected was a much smaller sample, 1590 votes, but it was more accurate than I expected. It correctly predicted 72 of the Hottest 100 songs and got 4 direct hits, including the number 1 song. I had Little Talks at number 14, so I guess a lot of people voted for Of Monsters and Men early, before my search window. That pushed Breezeblocks up to number 2 on my list instead of 3, and I only got 6 of the top 10 songs (though not in the right places). I got 4 wrong in the top 20, while the Warmest list got 3 wrong.
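To make these scores concrete, here’s a small sketch of how you could compute them. It assumes the predicted and actual countdowns are ordered lists of track IDs, such as the `data_id` values, with index 0 as number 1. The helper names are made up for illustration. “Overlap” counts songs that appear in both top-k lists, and “direct hits” counts songs in exactly the same position.

```python
def compare_rankings(predicted, actual, k=None):
    """Return (overlap, direct_hits) for the top k of two ranked lists."""
    if k is None:
        k = len(actual)
    p, a = predicted[:k], actual[:k]
    overlap = len(set(p) & set(a))
    direct_hits = sum(1 for x, y in zip(p, a) if x == y)
    return overlap, direct_hits


def overlap_by_depth(predicted, actual, depths=(10, 20, 50, 100)):
    """Fraction of the actual top k that the prediction also put in its top k."""
    return {k: compare_rankings(predicted, actual, k)[0] / k for k in depths}
```

Plotting `overlap_by_depth` over a range of depths gives the kind of “accuracy as we approach number one” curve shown in the charts.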

Considering that I had about one-thirtieth of their data, that’s pretty good. It’s a nice demonstration of the diminishing returns you get from sample size in statistics: that extra bit of accuracy takes a lot more data, because the standard error of an estimate is inversely proportional to the square root of the sample size.
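To put a rough number on that, suppose (as a simplification) that each of n votes independently goes to a given song with probability p. The standard error of that song’s estimated vote share is then:

```latex
\mathrm{SE}(\hat{p}) = \sqrt{\frac{p(1-p)}{n}}
\qquad\Longrightarrow\qquad
\frac{\mathrm{SE}_{n}}{\mathrm{SE}_{30n}} = \sqrt{30} \approx 5.48
```

So thirty times the data only cuts the error by a factor of about 5.5. For a song with 1% of the vote (p = 0.01) and n = 1590, the standard error is about 0.25 percentage points. At 30n it drops to roughly 0.046 percentage points.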

These charts, which I posted on Twitter, show how the accuracy of the two data sets improved as we closed in on number one.

All For Now

Well, that was fun!

I hope you learned a few things about statistics. I sure did.
